Practicals

These are the worksheets for the practicals of the “Engineering 2: Automated Software Engineering” module at the University of York.

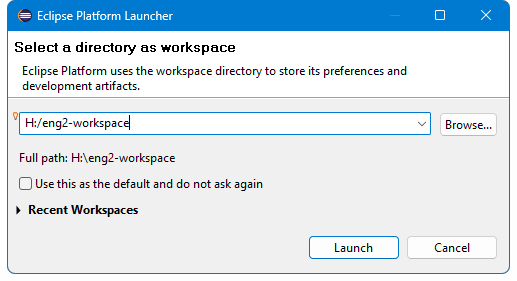

The practicals are divided into two parts:

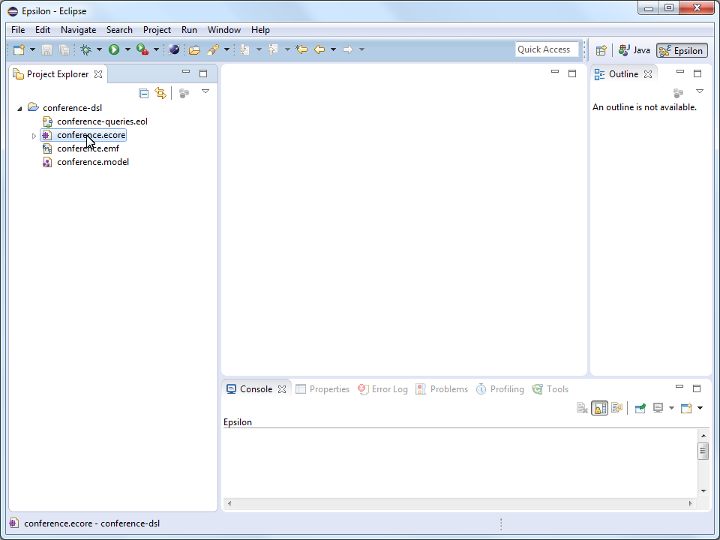

- Part 1 covers data-intensive systems with the Micronaut framework.

- Part 2 covers model-driven engineering within the Eclipse modelling ecosystem (EMF, Epsilon, and Sirius).

Part 1: data-intensive systems

- Micronaut basics

- ORM with Micronaut Data

- Integrating external services

- Reacting to events with Micronaut Kafka

- Deploying via container orchestration

Practical 1: Micronaut basics

This is the worksheet for the first practical in the Engineering 2 module.

In this practical, you will work through the basics of creating a microservice using Micronaut.

Work through every section of the practical in sequence, without missing steps. You can use the “Previous” and “Next” buttons on the left and right sides to quickly go between sections.

Introduction

In this practical, you will work through the basics of creating a microservice using Micronaut.

What you should already know

You should be able to:

- Create your own classes in the Java programming language.

- Be familiar with inheritance and implementing interfaces.

- Add annotations to classes, methods, parameters, and variables (e.g.

@Overrideon a method). - Use generics to parameterize types (e.g.

List<Integer>). - Use lists and maps from the Java Collections Framework.

- Write unit tests using JUnit.

If you need to read up on these concepts, consult the links in the Part 1 Java knowledge map in the VLE, and check the Learn Java section of the Dev.java website.

You should be familiar with these concepts from the lectures:

- The definition of software architecture as structure + architectural characteristics + decisions + design principles.

- The microservices architectural pattern.

- The REST principles and the 4 levels of the Richardson Maturity Model.

What you will learn

- How to create a new Micronaut project from scratch.

- How to import the project into IntelliJ.

- How to write controllers that handle HTTP requests in JSON format.

- How to produce a web-based interface to try out the controllers.

- How to write unit tests for the controllers.

What you will need

- Java 17 or newer: install from Adoptium.

- An IDE with Gradle and Java support: in this worksheet, we discuss IntelliJ IDEA.

What you will do

You will implement and test a minimal version of a microservice which manages a collection of books. The microservice will be able to create, retrieve, update, and delete books.

Starting your first project

Creating the project

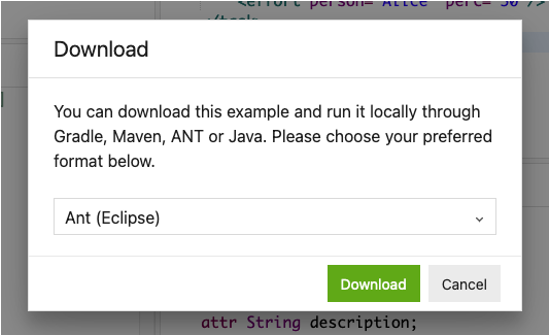

To create Micronaut projects, the fastest way is to use Micronaut Launch.

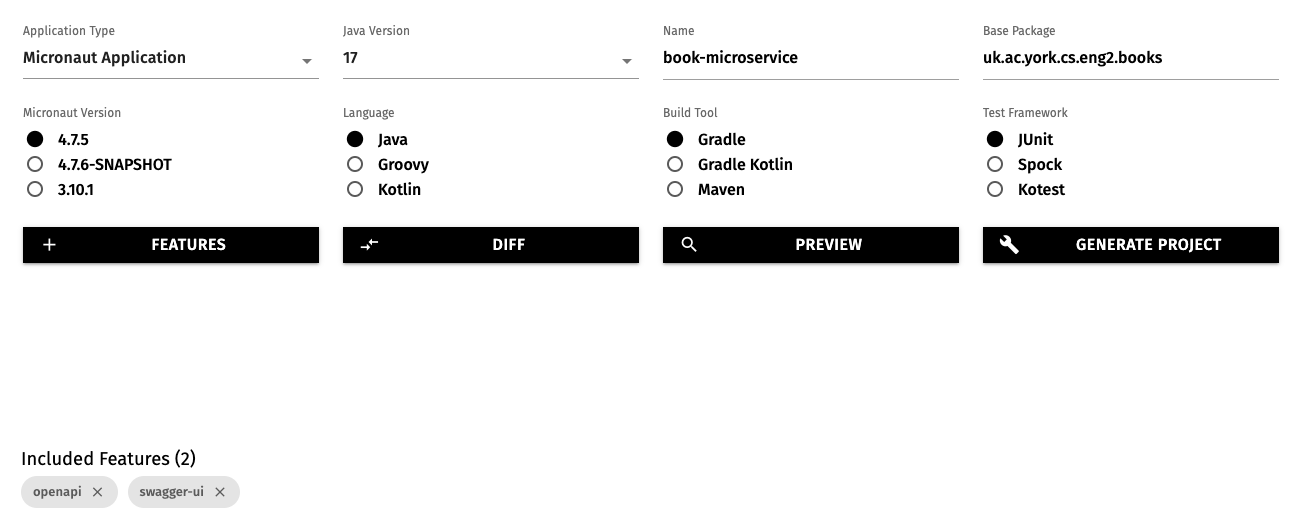

Open the above link in a new tab, and select these options:

- Application type: Micronaut Application

- Java version: 17

- Name:

book-microservice - Base package:

uk.ac.york.cs.eng2.books - Micronaut version: the latest non-SNAPSHOT 4.x version (4.7.4 as of writing)

- Language: Java

- Build tool: Gradle

- Test framework: JUnit

Click on the “Features” button, and add the openapi and swagger-ui features.

This will make Micronaut automatically produce a web-based user interface to try out our microservice.

The options will look like this:

Click on “Generate Project - Download Zip”, and unzip the produced ZIP file into a folder named book-microservice.

Importing the Micronaut project into IntelliJ

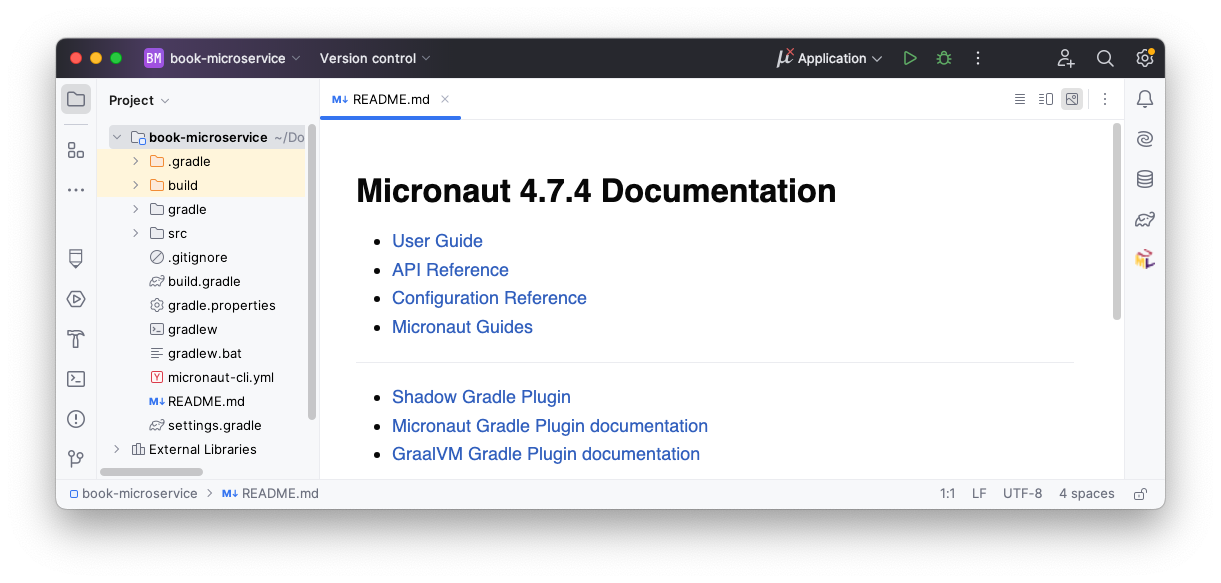

Open IntelliJ IDEA. If you have any projects open, close them with “File - Close Project”.

Select the “Projects” section on the left, and click on the “Open” button on the top right.

Select the book-microservice folder (the one containing the settings.gradle file produced by Micronaut Launch).

You may get a dialog from IntelliJ asking if you can trust the project: select “Trust Project”.

IntelliJ should now display your project, like this:

Setting the Java version in IntelliJ

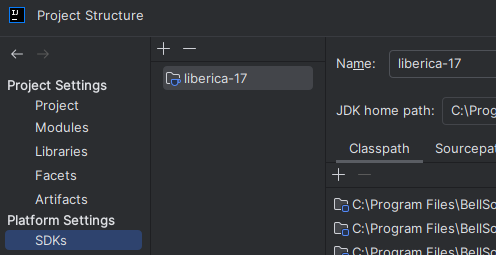

If your default Java installation is not Java 17, you will have to manually tell IntelliJ to use your Java 17 Development Kit.

First, go to “File - Project Structure…”, and in SDK ensure that you have picked a Java 17 installation, like this:

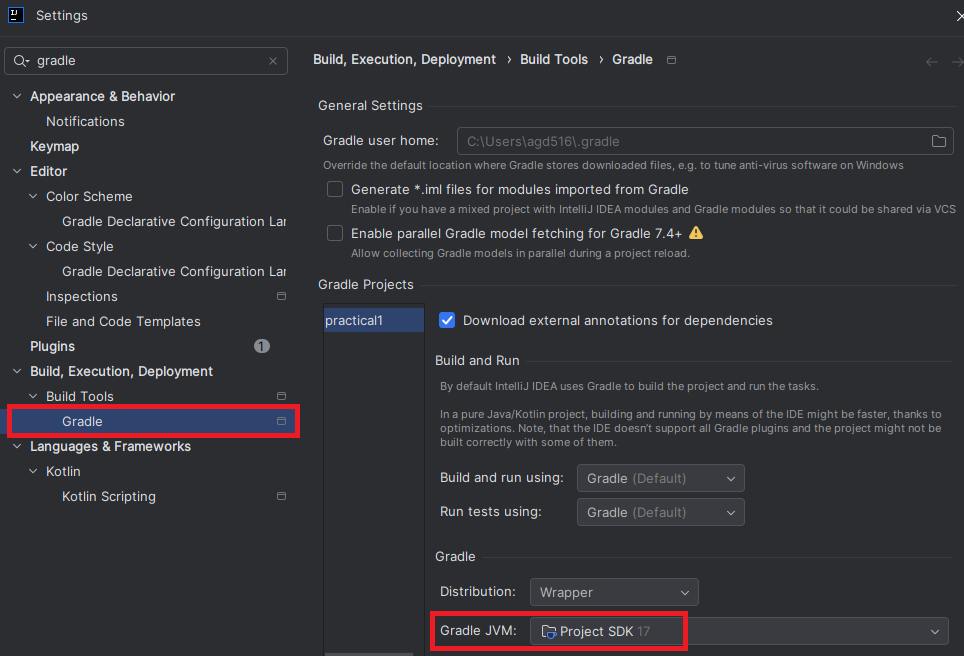

Click on OK, and then go to “File - Settings…” and search for Gradle on the left. Select the “Gradle” item inside the category for build tools, and ensure it uses the “Project SDK”, like this:

Adding your first endpoint

Micronaut projects are intended to implement the microservices at the “back-end” of your application.

A Micronaut project is made up of multiple controllers which respond to the HTTP queries sent by clients.

A controller is a Java class annotated with @Controller, where the methods are annotated according to the HTTP method being used (e.g. @Get, @Post, or @Put).

Micronaut may have already generated a BookMicroserviceController class for us as an example: to avoid any confusion, delete it.

Instead, we will create our own controller from scratch: typically you have one controller per resource to manage (e.g. books).

Writing the controller

First, create a resources subpackage within the main uk.ac.york.cs.eng2.books package, create a BooksController class in it, and annotate it with @Controller("/books"):

package uk.ac.york.cs.eng2.books.resources;

import io.micronaut.http.annotation.Controller;

@Controller("/books")

public class BooksController {

}

Note how the @Controller annotation takes a parameter, which is the common prefix to all the URLs handled by this controller.

In this case, the controller will handle all the URLs that start with /books.

We will then add a @Get method which will list the titles of the various books in our collection.

This is just to illustrate what it’s like to work with Micronaut: we will change it later to use an internal in-memory list that is populated with our requests (which we will upgrade in later weeks to a relational database).

@Get("/titles")

public List<String> getTitles() {

return Arrays.asList("title 1", "title 2");

}

As you can see above, the @Get annotation also takes a string, which is the additional suffix that makes up the whole URL being handled.

Trying out the controller via Swagger UI

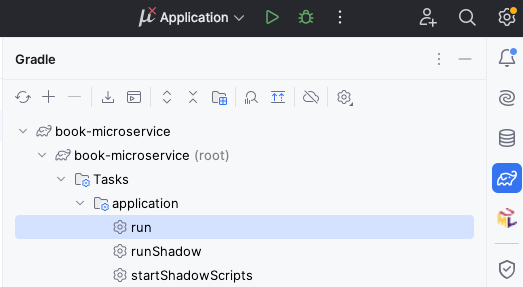

With this minimal amount of code, we can already try out the project. On the right side of IntelliJ, click on the Gradle icon (which looks like an elephant), find “Tasks - application - run”, and double click on “run”. If you have trouble finding it, see the screenshot below:

After some time, you will see a line like this:

13:07:12.346 [main] INFO io.micronaut.runtime.Micronaut - Startup completed in 249ms. Server Running: http://localhost:8080

This means that your Micronaut server is now ready to be tested.

To use the automatically generated web interface, visit http://localhost:8080/swagger-ui.

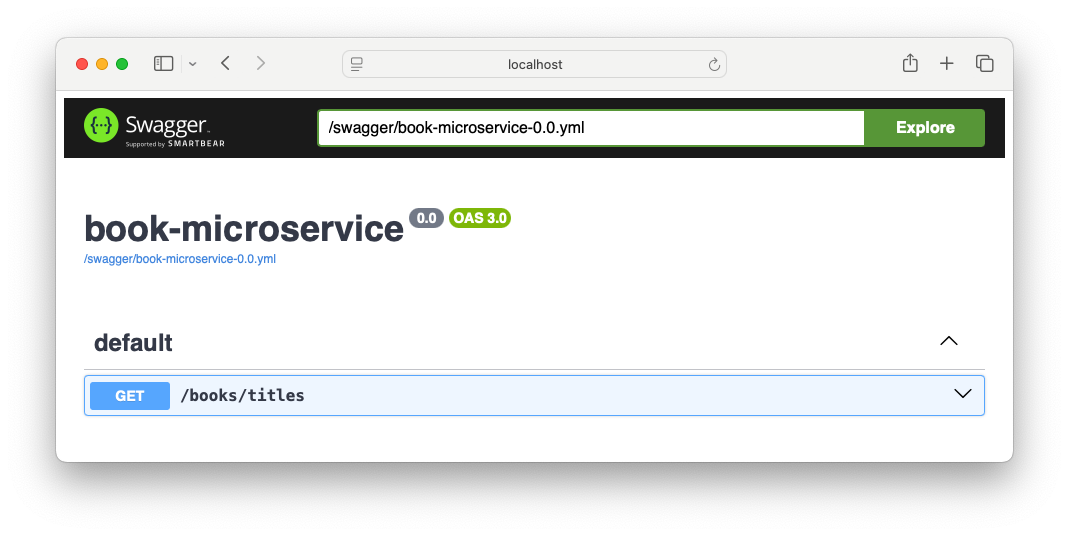

You’ll see an interface like this:

To try out your endpoint, expand the GET /books/titles element and click on the “Try it out” button.

You will then see an “Execute” button: click it to send the appropriate HTTP request.

It will look like this:

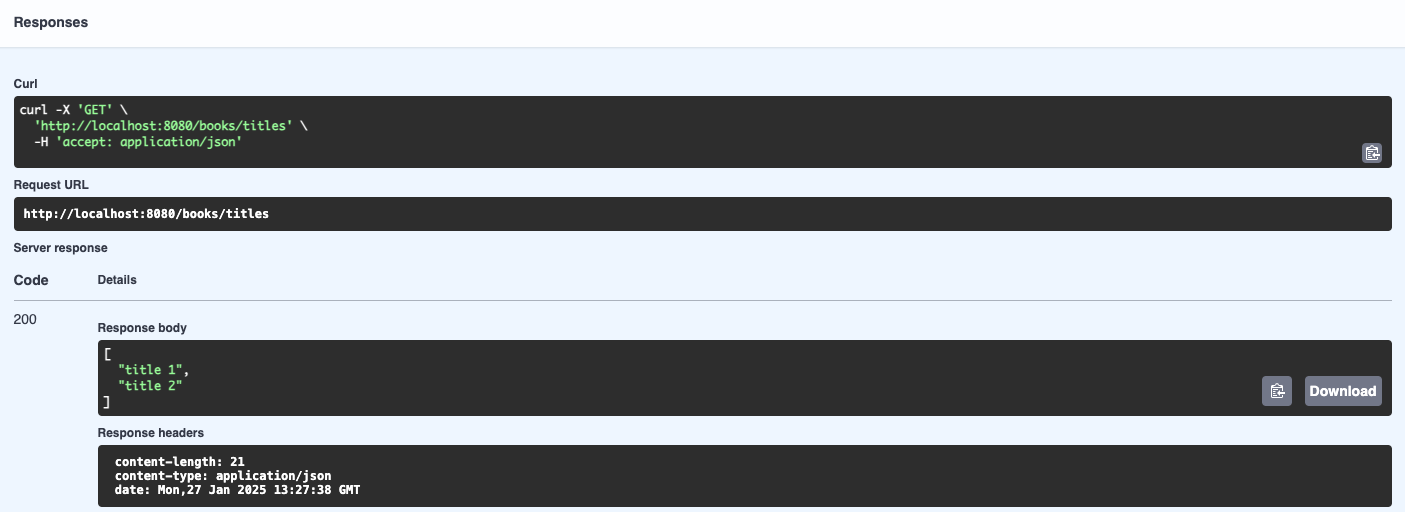

There are several parts here:

- The “Curl” section is a Unix terminal command that you can copy and paste to do the same HTTP request from outside a browser. In Windows, you can try this out from the “Git Bash” shell (Alt-right click on the desktop, and select Git Bash).

- The “Request URL” shows the full URL being targeted by our request: it combines the host and port, the

/bookspart from the controller, and the/titlesfrom the method. - The “Server response” section shows we receive an HTTP 200 status code (which means “OK”, i.e. successful response), and a JSON-formatted response with the two titles in our code. We can also read the various HTTP response headers that were produced by the server (length of the content in bytes, MIME type of the content, and the timestamp of the response).

Stop the program from IntelliJ, as we will now make some changes.

Sending Book objects

Rather than plain strings, we would like to send and receive all the information about a book in one go.

To do this, we will create a Data Transfer Object (DTO) class, which will be automatically turned to JSON by Micronaut so long as we annotate it as @Serdeable and follow certain conventions.

Writing the Book DTO

Note: the name Serdeable comes from “serialisable + deserialisable”.

Serialisation is the process of turning an in-memory object into a stream of bytes that you can send over the network or save into a file (e.g. by representing it as JSON).

Deserialisation is the reverse process of reading a stream of bytes and turning it into an in-memory object.

Create a new dto subpackage inside uk.ac.york.cs.eng2.books, and create a Book class inside it.

Annotate it with @Serdeable, to indicate to Micronaut that you want to serialise and deserialise it to/from JSON:

@Serdeable

public class Book {

}

For this example, let’s track the title and the full name of the author, and an integer id.

We will need to define them as properties to be serialised and deserialised.

There are several ways to do it (see the docs).

For this practical, we will use a pair of getter and setter methods.

For instance, to track the title:

- Add a

private String titlefield toBook. - Add a public

getTitle()method that returns the value of thetitlefield. - Add a public

setTitle(String newTitle)method that changes the value of thetitlefield.

Do the same for the author and the id.

Note: in IntelliJ, you can usually write just the fields, and then use “Code - Generate” to have it producer the getter and setter methods.

Using the Book DTO

Go back to our BooksController.

Rename the getTitles() method to getBooks(), change the @Get("/titles") to just @Get, and have it return a List<Book>.

Change the code so that it creates a few Book objects instead of just using strings.

Once you’re done with the changes, run the application as before, and try out the endpoint through the Swagger UI.

If you expand GET /books and scroll down to the “Example Value”, you’ll notice that it now shows a JSON object:

[

{

"id": 0,

"title": "string",

"author": "string"

}

]

Click on “Try it out” and then “Execute” to run your endpoint and check that it works as intended. You should get an HTTP 200 OK response like this one:

[

{

"id": 1,

"title": "Title 1",

"author": "Author 1"

},

{

"id": 2,

"title": "Title 2",

"author": "Author 2"

}

]

Managing books

Of course, there wouldn’t be much point in always responding with the same books: we want to be able to manage them.

To do this, we will need to add endpoints in our BooksController for creating, updating, and deleting books:

POST /booksshould accept aBookand add it to our collection.GET /books/idshould return theBookwith thatid.PUT /books/idshould accept aBookand update the title and author of the book with thatid.DELETE /books/idshould delete theBookwith thatid.

We will keep track of the books in a Java map, so they will be lost when we restart the application. We will cover how to store the books in a database in later practicals.

Adding books

The declaration of the controller method for POST /books would look like this:

@Post

public void createBook(@Body Book book) {}

Note how the method has a book parameter annotated with @Body: this indicates to Micronaut that the endpoint will deserialise a JSON-formattted Book object from the HTTP request body.

All the possible ways in which you can take parts of the HTTP request and bind them to variables are listed in the official Micronaut documentation.

Using the Java Collections Framework, add a map from integers to Book objects to your BooksController.

If you are unfamiliar with the JCF, consult the Java Collections Framework resources in the Java knowledge map of the VLE.

Implement the createBook method so it updates the map, and change the getBooks method so it returns the values of the map.

Try restarting the application, adding a few books through POST /books, and listing them through GET /books.

Getting a specific book

The next method will be for retrieving a specific book:

@Get("/{id}")

public Book getBook(@PathVariable int id) {}

@PathVariable is for binding a part of the path of the URL to a variable: specifically, the ID of the book that we want to fetch.

You can implement this method by simply returning the Book with that id, or null if we do not have it.

Try restarting the application. Try this:

- Add a book with

POST /books. - Check that

GET /books/{id}with the givenidreturns it, with an HTTP 200 OK response. - Check that

GET /books/{id}with anidthat we do not have produces an HTTP 404 Not Found response. This is because if a controller method returnsnullinstead of a DTO, Micronaut will map that to a 404 response.

Updating a book

You should be able to write the declaration of the method for PUT /books/{id} yourself, based on the above examples: just use @Put as the annotation for the method (with the appropriate string parameter).

Follow this approach:

- Get the

Bookwith the givenidin your map. If it does not exist, respond with HTTP 404: when your method does not simply return a DTO, you can instead throw anew HttpStatusException(HttpStatus.NOT_FOUND, message), where themessageis up to you. - Update the title of the book in your map.

- Update the author of the book in your map.

Note how this HTTP endpoint does not use the id inside the Book object sent in the request, but instead uses the id in the URL.

In fact, for update endpoints like these we would normally use a dedicated BookUpdateDTO that would not list id as a valid field.

Try it out in the Swagger UI before moving on.

Deleting a book

You should be able to write this method entirely yourself from the above examples, using the @Delete annotation on the new controller method, together with the appropriate string parameter.

Automated testing

Instead of trying out all our endpoints manually after every change, we’d like to have automated tests that they work as intended. Micronaut has specific facilities for helping with tests, while emulating real HTTP requests.

Creating the declarative HTTP client

In order to test all the steps that real requests would go through, our tests will send HTTP requests to our application, instead of directly calling the methods of the controller. To simplify that task, we will use the declarative HTTP client support in Micronaut.

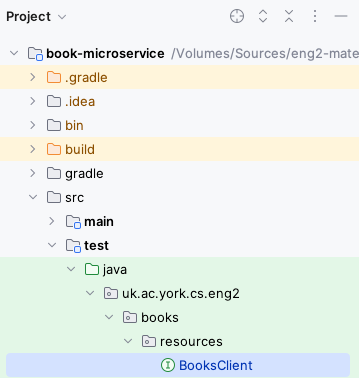

Within the test folder (which should have all the code for our tests, so we do not unnecessarily bundle it with a regular release), create a resources subpackage within uk.ac.york.cs.eng2.books.

Create a BooksClient interface in this same package.

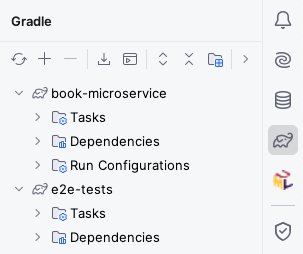

Your project should now look like this:

The interface should be annotated with @Client (using the same URL prefix as our @Controller), and have all the public methods of the BooksController, without their bodies.

It should look like this:

@Client("/books")

public interface BooksClient {

@Get

List<Book> getBooks();

@Post

void createBook(@Body Book book);

// ... rest of the public methods in your controller ...

}

Micronaut will automatically generate the code for the HTTP client based on this interface and its annotations.

Writing the first test

Since we will be testing the BooksController class, we will create a BooksControllerTest class in the same package as above.

Micronaut applications normally launch through the Application class that Micronaut Launch generated for you, as there is a certain process involved in their startup.

We need this startup process to happen before each of our tests as well.

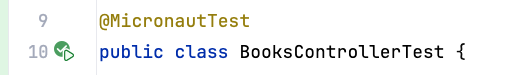

To do this, add the @MicronautTest annotation to your test class, like this:

@MicronautTest

public class BooksControllerTest {

}

For your tests, you will need the declarative HTTP client generated by Micronaut. Instead of creating the instance yourself, you should ask Micronaut to provide it to your tests by adding this inside your class:

@Inject

private BooksClient booksClient;

@Inject is one of several standard annotations for dependency injection: these are originally from the JSR-330 specification (starting with javax.inject), which were later renamed to jakarta.inject.

Micronaut supports a wide range of dependency injection mechanisms: here we use the simplest form of field injection.

Let’s add the most basic test one could imagine: if we ask for the list of books without having added anything yet, we should get the empty list. It would look like this:

@Test

public void noBooks() {

assertEquals(0, booksClient.getBooks().size());

}

Note: you may need to add import static org.junit.jupiter.api.Assertions.*; in order to have access to assertEquals and other JUnit assertions.

IntelliJ should recognise the class as a test class, and you should see an icon to the left of the public class BooksControllerTest line that you can click to run all the tests in the class.

This icon may change depending on the success or failure of your tests.

For instance, it looks like this after all tests have passed:

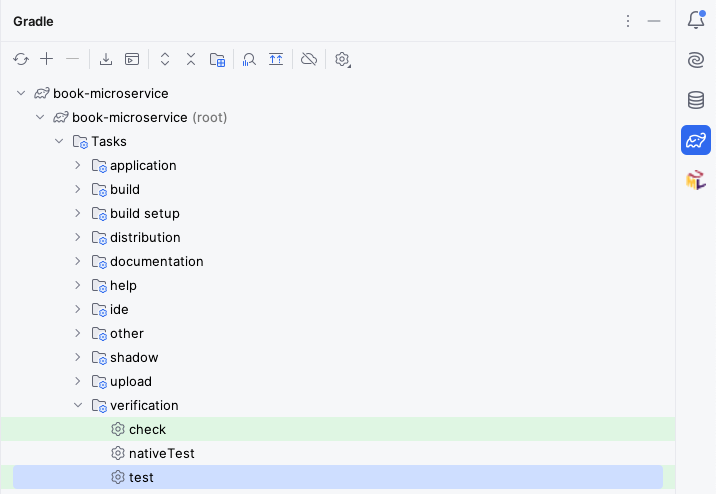

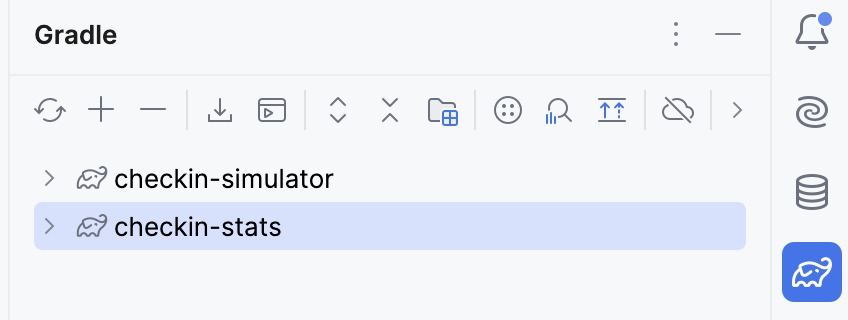

You can also run all the tests in your project by running the Gradle test task:

Your test should pass, and we can move on to the rest of the tests.

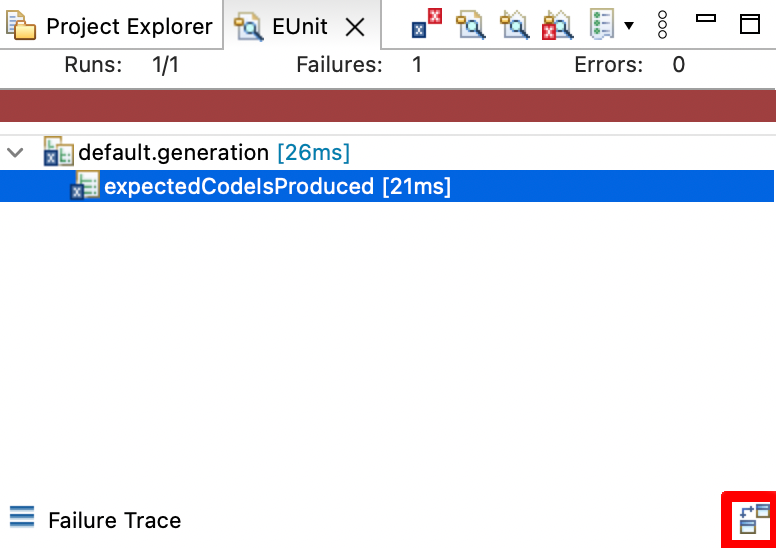

Measuring code coverage

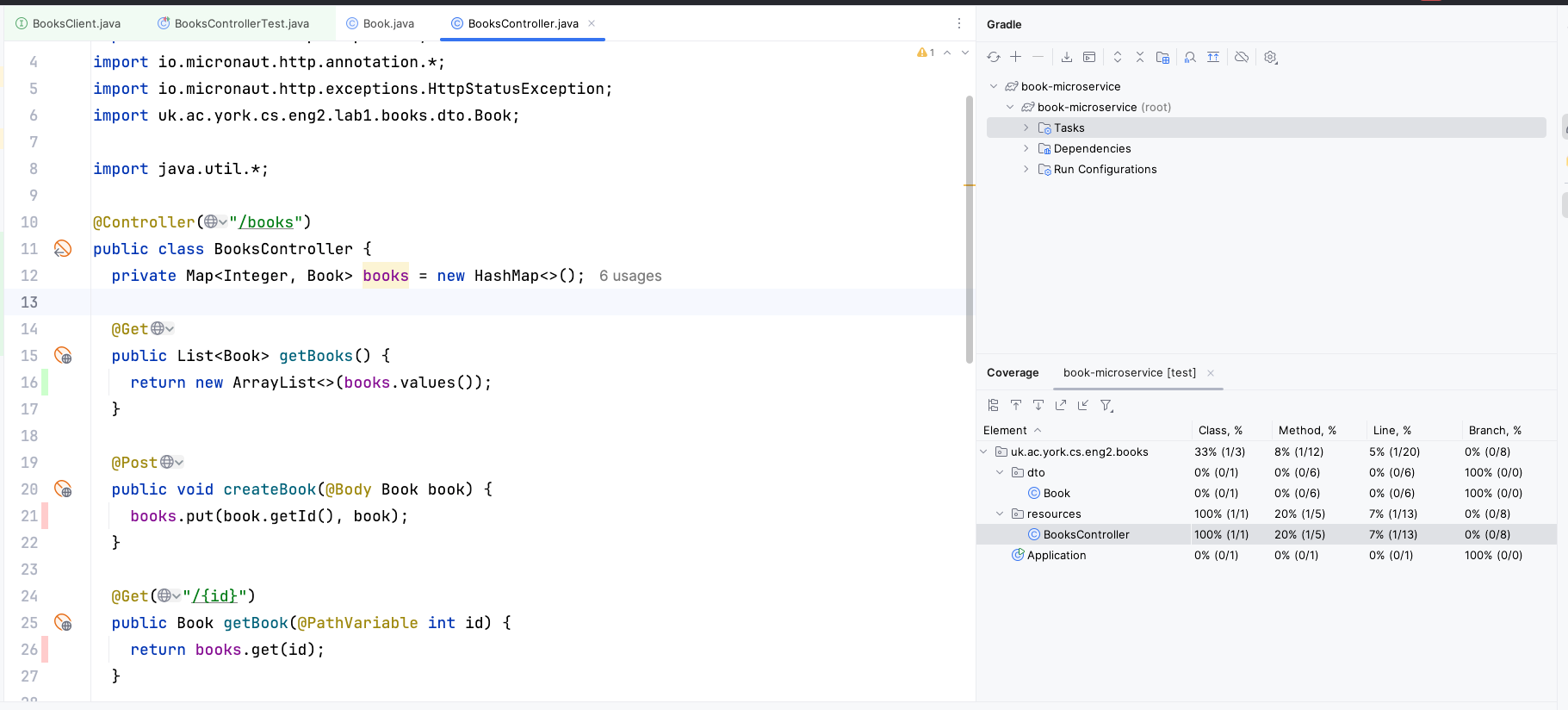

Obviously, there is still much to test. We should aim to have tests that cover all the important situations in our code. One way to find out what we are missing is to perform “coverage analysis”: you can do this from IntelliJ by right-clicking on the Gradle “test” task and selecting “Run with Coverage”.

You will get a report like this:

Here we have only covered 20% of the methods in the BooksController.

The lines we have missed are colored in red on the left: we have only covered getBooks() with our single test.

You may also see lines covered in yellow: this means that we only covered some of its branches (e.g. we only covered the if branch and not the else branch).

Adding the second test

Try adding a test which creates a book (via booksClient.createBook) and then gets all the books (via booksClient.getBooks).

If you run your tests again, you may notice that one of the two tests will fail.

This is because Micronaut is reusing the same BooksController object across tests, with the same underlying map from IDs to Books, so one test may interfere with the other.

To avoid this, change the @MicronautTest annotation to this:

@MicronautTest(rebuildContext = true)

public class BooksControllerTest {

// ... leave code as is ...

}

The rebuildContext = true option will make Micronaut recreate the controller for each test, so each test will start from a clean map from IDs to Books.

In a normal application, we would use a database instead of a Java map, and instead of rebuildContext = true we would have a @Before test setup method that would clean the database between tests: we will learn how do to it in later labs.

More tests for creating and updating books

Write test methods for each of these scenarios, and ensure they pass:

- Create a book, and then check that it can be retrieved by ID.

- Retrieve a book that doesn’t exist (assert that

getBook(...)returnsnull). - Create a book, and then update only its title.

- Create a book, and then update only its author.

- Create a book, and then delete it.

Testing deletion and updating of missing books

We still need to write test methods for these scenarios:

- Update a book that doesn’t exist.

- Delete a book that doesn’t exist.

However, there is a slight complication: 404 errors are not rethrown as exceptions from the Micronaut declarative client.

We were able to test the scenario where we try to fetch a missing book by asserting that the declarative client returned null instead of a Book object, but our updateBook and deleteBook methods simply return void, so we do not have anything to check in a JUnit assertion.

Go to the BooksClient declarative HTTP client interface, and change the return type of updateBook and deleteBook to HttpResponse.

Micronaut will then return the raw HTTP response, which will include the HTTP status code.

This will allow you to assert that an HTTP 404 Not Found response was produced with:

HttpResponse response = booksClient.updateBook(update, 23);

assertEquals(HttpStatus.NOT_FOUND, response.getStatus());

You should be able to write those two tests now.

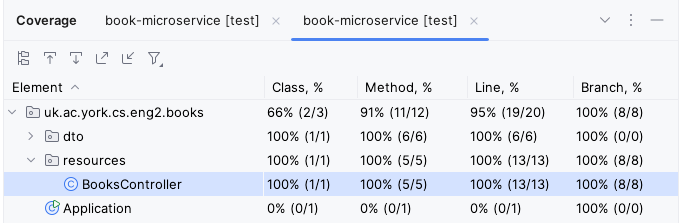

If you have written all your tests correctly, all the lines in BooksController should be highlighted in green, and you should be able to see 100% line, branch, and method coverage for that class:

Additional exercises

Congratulations! You have completed this first practical.

If you would like to gain more practice with the basics of building REST APIs with Micronaut, here are some things you could try.

For the harder exercises, we have an expanded model solution that covers some of them, in case you get stuck.

Enforcing ID uniqueness when adding books (easy)

POSTing a new book does not enforce that the ID is not already in use.

Add this logic, making it so trying to POST a Book whose ID is already in use produces an HTTP 400 Bad Request response.

Creating a DTO specific for updating (easy)

We use the same Book DTO for updating books, which allows for specifying an id that is not used.

Change the updating of books to use a dedicated BookUpdateDTO which only allows for title and author to be used.

This will make our expectations clearer to clients that use the Swagger UI or our generated OpenAPI definitions.

Managing authors (easy)

We only track books so far, but not their authors.

Create a new Author DTO and its own AuthorsController, plus the appropriate tests.

Relating authors and books (hard)

Extend Book and Author so they relate to each other: specifically, let’s assume that “a Book has at most one Author” and “an Author has zero or more Books”.

You will usually want to add a Set<Book> books field to Author, and an Author author field to Book.

Add endpoints to fetch the author of a book and the books of an author, and update existing endpoints to maintain their relationships. We suggest these:

GET /books/{id}/author: gets the author of the given book.POST /books: if the book specifies an author, make sure the author has been previously created, and add the book to the author’s set.PUT /books/{id}: if the updated book specifies an author, make sure the author has been previously created, and add the book to the author’s list. In addition, if the book had a different author before, remove the book from the old author’s set.GET /authors/{id}/books: gets the books of the given author.

You will need to annotate the books of an Author with @JsonIgnore, so they are not represented in the JSON version of an Author.

This is to avoid an endless loop where serialising an author serialises their books, which then serialise their author again.

You will need to refactor your code so the BooksController can check for the authors created so far.

There are several options:

- Use public static fields for the maps that hold the authors and the books. These are essentially mutable global variables now (which make testing and debugging harder).

- Add an

@Inject AuthorsController authorsControllerfield toBooksController, so it can ask the other controller for authors. - Move both maps to a new

ApplicationStateclass annotated as a@Singleton, and then@Injectit into both controllers.

You may notice that we have limited updating the Author-Book relationship to the Book side: this is to keep things simpler. In Practical 2, we will see this is a common practice when using databases as well: we would say that Book is the “owning” side of the Author-Book relationship as that is the side we make changes from.

Improving the generated OpenAPI specification (easy)

Micronaut automatically produces an OpenAPI description of your microservice, in YAML format. To improve its quality (making it easier to understand for humans and for things like ChatGPT function calling), you can do things like:

- Touch up the

@OpenAPIDefinitionannotation in yourApplication, as documented by micronaut-openapi. - Write better Javadoc comments for your controller methods.

Practical 2: ORM with Micronaut Data

This is the worksheet for the second practical in the Engineering 2 module.

Starting from a solution of Practical 1 (whether your own, or the model solution), you will change it to use a relational database via Micronaut Data.

Work through every section of the practical in sequence, without missing steps. You can use the “Previous” and “Next” buttons on the left and right sides to quickly go between sections.

Introduction

In this practical, you will take the microservice from Practical 1 and change it to use a database with Micronaut Data.

What you should already know

You should be able to:

- Implement basic Micronaut microservices that respond to HTTP requests (from Practical 1).

You should be familiar with these concepts from the lectures:

- The distinction between compute-intensive and data-intensive systems.

- The challenges related to the object/relational impedance mismatch.

- The differences between JDBC database drivers, ORM libraries, and Micronaut Data itself.

- The JPA annotations for persistent fields, one-to-many, many-to-one, and many-to-many relationships.

- The conventions followed by the methods in Micronaut Data

@Repositoryinterfaces. - The use of

@Transactionalto wrap invocations of controller methods in transactions.

What you will learn

- How to add Micronaut Data and Micronaut Flyway to an existing project.

- How to map a database schema to JPA annotations.

- How to query and update a database via Micronaut Data repositories.

- How to integrate the database into the tests of Micronaut microservices.

What you will need

- Java 17 or newer: install from Adoptium.

- An IDE with Gradle and Java support: in this worksheet, we discuss IntelliJ IDEA.

- A local installation of Docker Desktop.

Ensure Docker Desktop is running before you start this practical.

- Note: if using a lab machine, you will need to boot Windows as Docker Desktop is not installed in the Linux image.

If you have a recent installation of Docker Desktop (using Docker Engine 29 or newer), you will need to tell the Docker Java libraries to use version 1.44 of the Docker API, until this issue in Micronaut is fixed. From a Linux/Mac terminal, or from Git Bash in Windows, run this command:

echo api.version=1.44 > $HOME/.docker-java.properties

If you do not have Git Bash on Windows, you can run this from PowerShell instead:

"api.version=1.44" | set-content $HOME/.docker-java.properties -Encoding Ascii

What you will do

You will extend a solution of Practical 1 to use a database for persisting its data. This may be your own solution, or the model solution in the VLE.

Adding libraries

Before starting this section, you will need to have a solution to Practical 1. You can use your own, or you can start from the model solution of Practical 1.

What we need

We need to add a few things to our project from Practical 1:

- A JDBC driver: in this course we will connect to MariaDB databases.

- A connection pooling library: we will use HikariCP.

- A database migration framework: we will use Flyway.

- A database access toolkit: we will use Micronaut Data JPA.

- Micronaut Data JPA internally uses the Hibernate ORM.

Micronaut projects typically follow a modular structure, where you can add a number of features to it with support for various technologies. You already did this in Practical 1, by picking the features for OpenAPI generation and the Swagger UI. We will do the same here, but instead of creating a new project, we will be extending our existing project.

Updating our project

The easiest way to find out the changes we have to make in our project is to use Micronaut Launch.

Instead of generating a new project, we will instead produce a diff which will indicate the changes that we should make.

Open Micronaut Launch. Ensure the settings match those you used in Practical 1 (Java Version, Name, Base Package, Build Tool, and so on). Click on “Features” and select these elements:

mariadb: the JDBC driver for MariaDB.jdbc-hikari: the connection pooling library.flyway: the database migration framework.data-jpa: the Micronaut Data library in its JPA flavour.

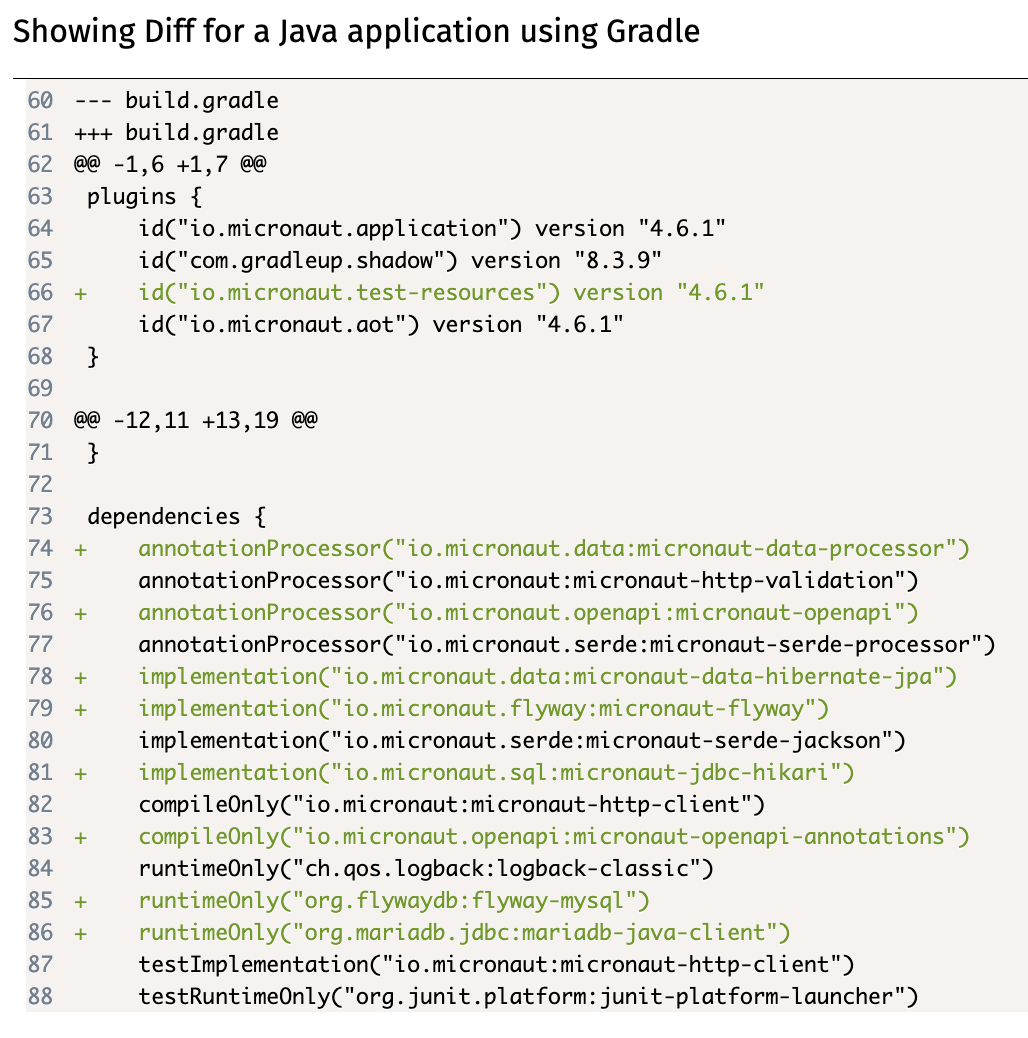

Click on “Diff”, and it will produce a patch in the unidiff format with the changes that would have to be made, like this one:

Unfortunately, this patch will often not be directly usable via automated tools (as we will have made customisations from the initially generated project), so we will have to make its changes manually. Here is a quick summary of the notation:

--- OLD_FILEand+++ NEW_FILErefer to the old and new locations of a file.OLD_FILEandNEW_FILEmay be the same if the file has not been moved.@@ -oldL, oldS +newL, newS@@start ahunkdescribing a change in the file. The hunk refers to the lines fromoldLtooldL+oldSin the old file, and to the lines fromnewLtonewL+newSin the new file.- Lines starting with

-are the old lines to be removed, compared to a default Micronaut configuration. - Lines starting with

+are the new lines to be added, compared to a default Micronaut configuration. - Lines starting with a space are just context to help understand the change.

If you read through the changes, you will see that you have to do the following:

- Optionally, add a few

featuresto the list inmicronaut-cli.yml, which match the ones you selected in Micronaut Launch. This is only used by Micronaut CLI, which is not used in the module: you may skip this. - Add the

io.micronaut.test-resourcesplugin to the list in yourbuild.gradlefile. - Add any dependencies you don’t have already to the

dependenciesblock in yourbuild.gradlefile. - Optionally, add some text to your

README.mdfile with some useful links to the relevant documentation. - Create a new

src/test/resources/application-test.propertiesfile with some content within your project. - Add a few lines to your

src/main/resources/application.propertiesfile.

When copying and pasting the new lines, remember to remove the initial + as that is just for indicating that it’s new text.

What do these changes mean?

The changes in the micronaut-cli.yml file are for integration with the Micronaut CLI, which we do not use in this module: you can skip them.

The changes in the build.gradle file make some important additions:

- They add the Micronaut Test Resources plugin to our Gradle build, which will use our local installation of Docker Desktop to automatically start anything we need for local development and testing (namely, MariaDB and later Kafka).

- They add the various Java libraries required to use Micronaut Data JPA with a MariaDB database.

As said above, the README.md changes are just some useful links that you can check while doing this practical.

The application-test.properties changes tell Hibernate that it should not try to automatically create tables in our database from our JPA-annotated classes, by setting the hbm2ddl.auto option to none. (hbm refers to Hibernate, and ddl refers to SQL Data Definition Language queries like CREATE TABLE.)

The application.properties changes do a few things:

- They indicate that the

defaultdata source is a MariaDB database, using theMYSQLdialect of SQL (used for the underlying JDBC connection), and specifying the fully-qualified class name of the MariaDB JDBC driver. - They turn on Micronaut Flyway for the

defaultdata source, so it will automatically apply all pending database migrations on startup. - Since we’re using Flyway, they also disable

hbm2ddlhere.

Ready to move on?

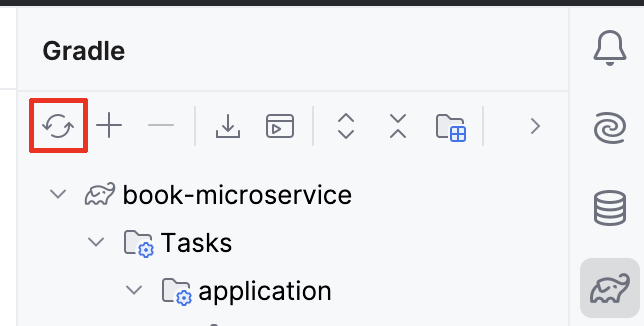

Once you’ve made all the changes in the patch produced by Micronaut Launch, ensure that your IDE reloads the Gradle project.

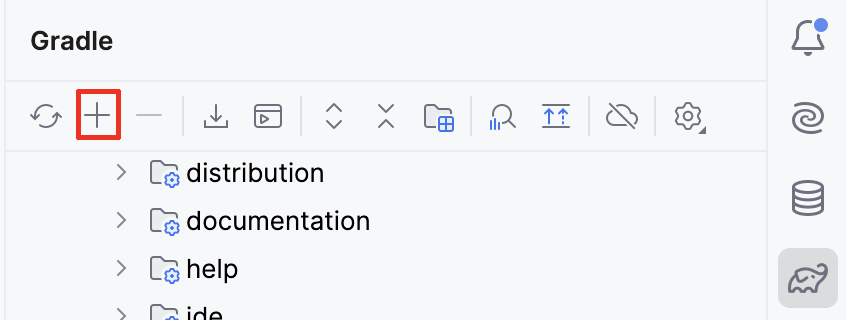

In IntelliJ, this can be done by pressing the “Reload All Gradle Projects” in the Gradle drawer:

Do not try to run the project yet, as we still have some changes to make.

Book entity

Let’s migrate our in-memory Books to database records.

We will create the first migration for our database, write the JPA entity, and migrate our codebase to use it.

Creating the database migration

We are using Micronaut Flyway to maintain our database schema over multiple releases of the microservice.

In this first release, we will create the hibernate_sequence sequence needed by Hibernate to compute primary keys for new objects, and the book table needed to store books.

In IntelliJ, create the src/main/resources/db/migration/V1__create-book.sql file.

The V1 is used to indicate that this is the first migration to be run, and then we have two underscores to separate the version number from the short description of the migration.

The file should have this content:

create sequence hibernate_sequence;

create table book (

id bigint primary key not null,

title varchar(255) not null,

author varchar(255) not null

);

Renaming the Book DTO class

We will be creating a Book domain class soon, so to avoid confusion we should rename our existing Book Data Transfer Object (DTO) class to BookDTO.

From now on, we will follow the convention of adding DTO at the end of the name of every DTO class.

You can do this by right-clicking on Book within the Project section of IntelliJ, selecting “Refactor - Rename” and entering BookDTO.

Do not rename similarly named fields or classes.

Creating the Book domain entity

We now need to create a JPA @Entity class that matches the book table.

Create a new domain subpackage within uk.ac.york.cs.eng2.books, and create a Book class within it.

If you remember from the lecture, mapping the book table to a JPA entity would require these steps:

- Annotate the class with

@Entityfrom thejakarta.persistencepackage. - The

idcolumn should be mapped to anidfield of typeLong, annotated as@Idand@GeneratedValue. - The

titlecolumn should be mapped to a field of the same name of typeString, annotated with@Column. - The

authorcolumn should be mapped in the same way astitle.

Add getter and setter methods for each of your fields. You can do this via the “Code - Generate…” menu item.

Creating the Book repository

We will need to perform a few common database queries, like listing the books, finding a book by its primary key, and so on.

Instead of writing SQL by hand, we will use a @Repository interface.

Create a new repository subpackage within uk.ac.york.cs.eng2.books, and create a BookRepository interface within it.

The interface should extend PageableRepository<Book, Long>, and should be annotated with @Repository from io.micronaut.data.annotation.

It will look like this:

@Repository

public interface BookRepository extends PageableRepository<Book, Long> {

}

Updating your controller

You should now revisit your BooksController, and make it use the repository instead of the Map of books it has now.

First, replace this:

private Map<Integer, BookDTO> books = new HashMap<>();

With this:

@Inject

private BookRepository repository;

This will make Micronaut automatically inject an implementation of BookRepository into your controller when the application starts.

You need to rewrite the various methods in the controller to answer the various requests through the methods in the repository. You will find these repository methods useful (there is a full reference on the Micronaut Data Javadocs):

repository.findAll()lists all the books.repository.findById(id)returns anOptional<Book>given an ID.Optional<T>is the type of aTvalue that may or may not be present. If you have anOptional<T> o, check first if it has a value witho.isPresent(): in that case, obtain the value viaget(), otherwise react accordingly (e.g. producing an HTTP 404 error response).repository.save(book)saves aBookto the database. If theBookhas an ID (which you should not set yourself: Micronaut Data will do it for you), it will perform an update of the row with that ID, otherwise it will insert a new row and return theBookwith its auto-generated ID set.repository.existsById(id)returnstrueif aBookexists in the database with that ID, andfalseif it does not.repository.deleteById(id)will delete a book from the database, given an ID.

While rewriting the methods, take these aspects into account:

- You could continue to use

BookDTOobjects for your requests and responses, or you could add@SerdeabletoBookand use it instead. DTO objects are normally used when you do not want to send or receive all the fields in the original entity, due to privacy, security, or bandwidth concerns. Domain objects can be used when you do want to send everything about the entity. - Again, you must not set the ID of a

Bookyourself: Micronaut Data will do it for you when you call the repository methods. - You will need to change the

@PathVariablearguments tolong, as the primary key ofBookis aLongvalue (since we usedbigintin the database schema). You will have to change the equivalent arguments in the declarative HTTP client interface you used for testing as well (BooksClient). - You will need a

@Transactionalannotation on the method you use to update an existing book, as you will need to first obtain the existingBook, then update its fields, and then save it. If you do not specify this@Transactionalannotation, you will get an error like this one:

This is because thedetached entity passed to persist: uk.ac.york.cs.eng2.books.domain.BookBookreturned byrepository.findById(id)would be immediately detached from its database session: without the@Transactionalannotation, each repository method call runs on its own separate transaction. The@Transactionalannotation ensures that all repository calls within the method are running as part of the same transaction.

Reporting the ID of the created books

We need to change the behaviour of the controller method responsible for creating books (createBook in the model solution), so that it lets the client know about the ID of the Book that it created, in case the client wants to make follow-up requests about the book (since the ID is auto-generated by the database).

You have two options:

-

The simplest option is to just return the

Bookthat was produced by therepository.save(book)call. ThisBookwill have the ID filled in. -

An alternative option is to return an

HttpResponsewith the appropriateLocationheader. This can be done with this code:return HttpResponse.created(URI.create("/books/" + book.getId()));This second style matches better the REST approach, as using the proper HTTP 201 Created status code with the Location of a newly created resource is part of Level 2 of the Richardson Maturity Model.

Regardless of which option you choose, make sure to update the declarative HTTP client interface in your tests (BooksClient) to match.

Updating our tests

We should now revisit our tests, as they will work from a database instead of working from an in-memory data structure.

Open the BooksControllerTest class.

Replace this @MicronautTest annotation:

@MicronautTest(rebuildContext = true)

With this annotation:

@MicronautTest(transactional = false)

This is needed so that the tests are not running within a transaction, as that would isolate them from the effects of invoking our microservice on our database (so they would not see any rows that were changed, for instance).

We need to clean the database between tests, to avoid any interference from one test to another.

Add this test setup code to your BooksControllerTest:

@Inject

private BookRepository bookRepository;

@BeforeEach

public void setup() {

bookRepository.deleteAll();

}

Next, you should change the tests so that they do not specify an ID when sending requests to create books (as the IDs will be automatically generated by Micronaut Data). You should only send the desired title and author, and then obtain the ID of the created book from the response:

- If you decided to return the created

BookincreateBook, you can callreturnedBook.getId(). - If you are producing an HTTP 201 Created response with a Location header, you will need to extract the ID from the header.

This could be done with code like this, which you may want to extract to a utility method:

HttpResponse<Void> createResponse = booksClient.createBook(b); Long bookId = Long.valueOf(createResponse.header(HttpHeaders.LOCATION).split("/")[2]);

If you have made all the necessary changes, ensure Docker Desktop is running, and then run your tests. Before moving on, ensure that all your tests are passing.

Automated database provisioning via Micronaut Test Resources

Launch the Gradle run task, and try out the endpoints in your microservice through the Swagger UI.

You should see them working as intended, as the tests passed.

Before stopping the application, consider one thing we haven’t done. We have not specified any database connection details, yet we have a database: how did that happen?

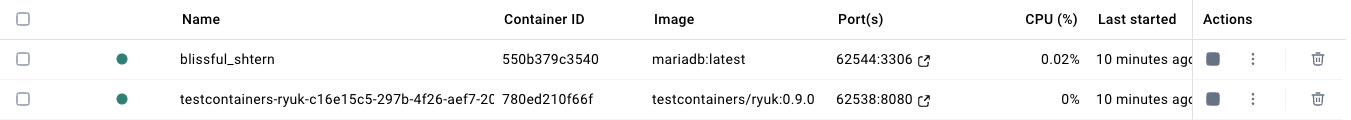

The answer is the Micronaut Test Resources Gradle plugin.

You may remember that we added it to our build.gradle file at the start of the practical.

This Gradle plugin sets up Micronaut so that if we do not specify a database connection URL, and the Micronaut application requires a database, it will automatically start a Docker container and connect Micronaut to it.

This is very useful for local development and testing, as we can forget about having to set up a database server ourselves!

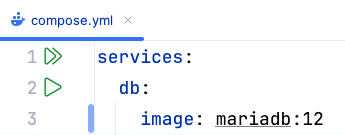

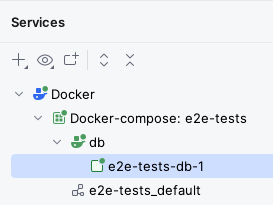

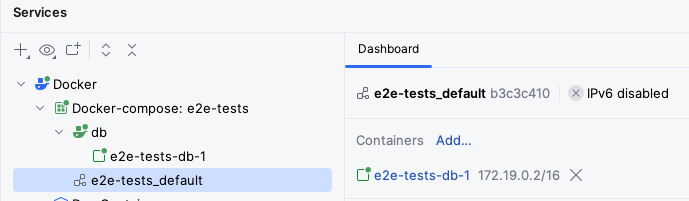

Try opening Docker Desktop while your application is running, and you should see something like this:

One of the containers uses the mariadb:latest image: this is the MariaDB server that Micronaut Test Resources has started for us.

This server will be automatically destroyed when we shut down the application.

You can also note that its Port is 62544:3306: this means that port 62544 in our local machine points to port 3306 inside the container (3306 is the default MariaDB port).

Logging SQL queries for inspection

In some cases, you may want to log all SQL queries being executed.

For example, to check if they may be running an inefficient query that could potentially take a long time.

To do this, add the following to your application.properties file:

# Logs all queries

jpa.default.properties.hibernate.show_sql=true

jpa.default.properties.hibernate.format_sql=true

Try restarting your application, and listing all books. You should see text like this in your IntelliJ console:

Hibernate:

select

b1_0.id,

b1_0.publisher_id,

b1_0.title

from

book b1_0

Warning

Do not use this in production! You should remove these logging lines once done, as otherwise they could reveal sensitive information in the logs, and they would significantly increase their size. They should only be used during development, and never in production environments.

(Optional) Integrated database client in IntelliJ Ultimate

Knowing the local port can be useful if we want to inspect the database ourselves. For instance, IntelliJ Ultimate (which you can get via their education program) comes with a convenient database client out of the box. On the right side of the window, click on the “Database” icon, which looks like three stacked cylinders:

Try creating a data source of type MariaDB, connecting to localhost on the port shown in your Docker Desktop window (note that this may differ from the above screenshot, and can change every time you run Micronaut).

Use test for the user and the password.

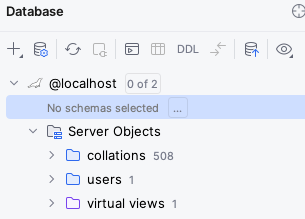

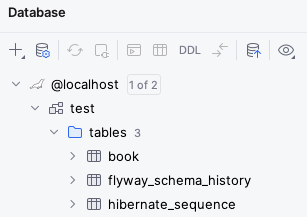

Once you connect, you will get something like this:

Click on the “…” next to “No schema selected”, and choose the test schema.

You should now be able to see all the objects in the database and query their contents:

You may notice that besides the book table and hibernate_sequence that we created in our .sql file, there is also a flyway_schema_history table.

This is an additional table that Flyway uses to keep track of which migrations have already run in the database, so that it doesn’t try to run them again if you were to restart the application.

For more information on the IntelliJ database client, check its official documentation.

1:N with Publisher

We created the Book entity that mapped the rows in our book table, and we created a controller that allowed for managing these Books via HTTP requests.

However, we also need to deal with other entities that may be related to books.

For instance:

- Each

Bookis published by aPublisher(a “many-to-one” relationship). - A

Publisherpublishes manyBooks (a “one-to-many” relationship).

In this section, we will practice with implementing such a relationship in our application.

Migrating the database

We need to add a Flyway database migration that will create the appropriate publisher table, and add the publisher_id foreign key to the book table.

Create a src/main/resources/db/migration/V2__add-publisher.sql file with this content:

# Creates the table for our publishers

create table publisher (

id bigint primary key not null,

name varchar(255) unique not null

);

# Adds the foreign key from a book to its publisher

alter table book

add publisher_id bigint references publisher (id);

Basic functionality for publishers

Create the Publisher entity in your application and its repository.

Add a controller that allows you to create, list, update the name, and delete its entries, by handling these HTTP requests:

GET /publishers: list all the publishersPOST /publishers: add a publisherGET /publishers/{id}: get a specific publisher by IDPUT /publishers/{id}: update the name of a publisher by IDDELETE /publishers/{id}: delete a publisher

As these steps will be the same as for Book, we will not provide detailed step-by-step instructions on how to do it.

Ignore the relationship between Book and Publisher for now.

Don’t forget to write the appropriate tests for it!

Adding the relationship to the entities

Let’s extend Book and Publisher so they know about each other.

First, add this to Book:

@ManyToOne

private Publisher publisher;

Generate the getter and setter methods (getPublisher / setPublisher) as usual in your IDE.

In addition, if your Book domain entity is @Serdeable, add @JsonIgnore to the new publisher field, as we want to avoid a scenario where we serialise the entire Publisher and all their books when we’re trying to send a single Book over the network.

@JsonIgnore means that the field will not be serialised via JSON.

Likewise, add this to Publisher, as well as the appropriate getter and setter methods:

@OneToMany(mappedBy = "publisher")

private Set<Book> books = new HashSet<>();

If you remember from the lecture, in bidirectional relationships like this one, there is one side that owns the relationship: in other words, the side that you should change - the other side is only for reading.

In the case of one-to-many + many-to-one relationships, the owning side is always many-to-one.

In other words, Book is the owner of this relationship.

The side that does not own the relationship (Publisher) indicates via mappedBy the name of the property on the other side that owns the relationship (in this case, publisher in Book).

Likewise, if you made your Publisher to be @Serdeable, add the @JsonIgnore annotation to your books field.

Before we move on, check that all your tests are still passing.

Extending the controllers

We want to add support for this Book->Publisher relationship to our controllers.

Specifically, we want to support these features:

POST /books/{id}should allow for specifying the publisher.PUT /books/{id}should allow for setting and unsetting the publisher of aBook.GET /books/{id}/publishershould return the publisher of aBook.GET /publishers/{id}/booksshould list theBooks of aPublisher.

You should be able to implement these yourself with what you have learned so far for the most part. There are a few things to take into account, though:

- For specifying the publisher while creating or updating a

Book, you may now need to create aBookCreateDTOwith a dedicatedLong publisherIdfield, asBookitself will not allow you to specify that information (since it will just have aPublisher publisherfield). - Fetching the publisher of a

Bookcan be done in two main ways:-

If you use

repo.findById(id)to get theBookand then usebook.getPublisher()to get thePublisher, you will need to add the@Transactionalannotation to the controller method so both queries will run in the same transaction. Otherwise, you may get an error message on thebook.getPublisher()call, asbookwill no longer be connected to a database session. -

Alternatively, you can add a custom query method to your

PublisherRepositoryand retrieve the appropriatePublisherin one call, like this one - we picked this name specifically so we’d obtain thePublisherthat has the givenidamong itsbooks:Optional<Publisher> findByBooksId(Long id);

-

- When fetching the

Books of aPublisher, you can follow two approaches:- Use a

@Transactionalcontroller method that first finds thePublisher, copiespublisher.getBooks()to a newList<Book>(to avoid any issues with lazy collections) which it then returns. - Use a custom query method in your

BookRepositorywhich fetches thoseBooks directly - again, we picked the name specifically to find theBooks whosepublisherhas the given ID:List<Book> findByPublisherId(long publisherId);

- Use a

When testing, consider that you will need to modify the @BeforeEach method so it deletes all the Books first, and then all the Publishers.

If you try to delete all the Publishers first, you may see errors as some Books may still be pointing at them.

Once you are done with the above functionality and your tests pass, move on to the next section.

M:N with Author

In the previous section, we added Publisher entities and a bidrectional one-to-many relationship between Book and Publisher.

In this section, we will promote the authors of a book to its own Author entity, and implement a many-to-many relationship between Book and Author.

Migrate the database

Create a new database migration script named V3__add-author.sql, with this content:

-- Authors

create table author (

id bigint primary key not null,

name varchar(255) not null

);

-- Join table between books and authors

-- Book owns the relationship, so the name is book_author, rather than author_book

create table book_author (

-- Name is (field name)_(primary key of referenced entity)

books_id bigint not null references book (id),

authors_id bigint not null references author (id),

-- Primary key of this table is the composite of the

constraint primary key (books_id, authors_id)

);

-- Migrate existing authors into author rows

insert into author

select nextval(hibernate_sequence), b.author

from (select distinct author from book) b;

-- Join up books and authors

insert into book_author (books_id, authors_id)

select book.id, author.id

from book join author

where book.author = author.name;

-- Drop the old author column

alter table book

drop column author;

This script creates the new table for our Author entity, and a join table between Book and Author as this is a many-to-many relationship.

You’ll notice that it also migrates the existing data into this new schema, and drops the old author column as that is no longer relevant.

This is an advantage of a database migration tool like Flyway: if we had deployed a previous version in production, this script would automatically migrate existing data during the upgrade. Obviously, migrating production data is a sensitive matter, so this migration would have to be carefully tested in your development environment first, and you would also want to do periodic backups and to perform a backup right before such a migration.

Update the Book entity

Remove the author field and its setter and getter from the Book entity, and correct any tests that may have been affected.

If you use DTOs for some of your Book-related requests or responses, remove any author fields from them as well.

Before moving on, ensure all your tests pass again.

Create the Author entity

Create the Author entity class, add the controller for it, as well as any appropriate tests.

Ignore the relationship between Author and Book for now.

Add the Book-Author relationship

Let’s add the bidrectional many-to-many relationship between Book and Author.

We will make Book own the relationship, so add this to Book, plus its getter and setter:

@ManyToMany

private Set<Author> authors = new HashSet<>();

As we said before, if you made your Book to be @Serdeable, you should add @JsonIgnore to the authors field so that it will not be part of its JSON serialisation by default.

Add this to Author, together with its getter and setter methods:

@ManyToMany(mappedBy="authors")

private Set<Book> books = Collections.emptySet();

You’ll notice that here were used mappedBy to indicate the name of the field in Book that owns this bidirectional relationship.

As said above, the mappedBy side is the one that does not own the relationship (it’s only for reading, not for modifying).

We can make the default value of the books field to be an unmodifiable empty set, so it will immediately complain if someone tries to modify it.

When we query the databse, our ORM will automatically replace this set with a lazily-loaded collection, which will be strictly limited to reading.

Extending the controllers

It’s time to expose this many-to-many relationship from our API. There are many different ways we could do it, but for this practical, implement these endpoints:

GET /books/{id}/authors: list the authors of a book.PUT /books/{id}/authors/{authorId}: add the given author to the book. Do nothing if the author is already listed on the book.DELETE /books/{id}/authors/{authorId}: remove the given author from the book. Do nothing if the author is not listed on the book.GET /authors/{id}/books: list the books of an author.

These are some aspects to take into account:

- To update the set of authors associated to a

Book, you only need to save theBookitself (i.e.repository.save(book)) after adding or removing the relevantAuthorfrombook.getAuthors(). The ORM will figure out what needs inserting and deleting. - Remember from the previous section that if you need to chain multiple queries (e.g. find a

Bookand then copy its authors to a newListto avoid issues around trying to serialise a lazily-loaded collection outside a database session), you will need to annotate the controller method as@Transactional. - You could use custom repository methods to directly find the

Authors by the ID of theBook, or find theBooks by the ID of theAuthor. Review the notes from the previous section for inspiration.

Additional exercises

Congratulations for getting this far! This has been a long practical, as we’ve had to touch upon a wide range of tasks around implementing an API to manage a set of entities in a database.

If you would like to go beyond this practical, here are some ideas you could try out.

Adding creation or update timestamps

Micronaut Data supports the @DateCreated and @DateUpdated annotations to automatically record the timestamps of when an entity was created or last updated.

Consult the Micronaut documentation and give this a try.

Adding new entity types and relationships

Consider adding another one-to-many or many-to-many relationship around your entities.

For instance:

Bookhas multipleGenres, which are also associated to multipleBooks- You can organise some

Books intoSeries(e.g. the Lord of the Rings books, or the Harry Potter books). You would have a one-to-many relationship fromSeriestoBook. How would you record the order of a givenBookin aSeries?

Adding by-name endpoints

You could add endpoints to find authors or books by name, such as:

GET /books/byName/{name}GET /authors/byName/{name}

This will require creating custom repository methods, e.g. findByName.

Adding paging

In a real application, you would never return all the records in one go. This is because you could overwhelm both the client and the server if you tried to send thousands of records in one go. Instead, a common strategy is to use pagination.

Have a look at the relevant section of the Micronaut Data documentation on how to do paginated queries, and consider changing your listing endpoints so they can accept an optional page number, like this:

@Get("/{?page}")

Page<Author> list(@QueryValue(defaultValue = "0") int page) { ... }

Note that page numbers start at zero for Pageable.

If you’d prefer page numbers to start at one in your API, feel free to make any necessary adjustments.

Practical 3: Integrating external services

This is the worksheet for the fourth practical in the Engineering 2 module.

We will start from a solution to Practical 2, and integrate an external service to fetch more information about the books. You will practice with using the Gateway pattern to encapsulate the details of accessing this external service.

Work through every section of the practical in sequence, without missing steps. You can use the “Previous” and “Next” buttons on the left and right sides to quickly go between sections.

Introduction

In this practical, you will extend the book-related microservice by adding an integration with the OpenLibrary APIs to fetch more information about the books that are added to the database.

What you should already know

You should be able to:

- Implement basic Micronaut microservices that respond to HTTP requests (from Practical 1).

- Query and update a relational database within a Micronaut microservice using Micronaut Data (from Practical 2).

You should be familiar with these concepts from the lectures:

- The differences between in-process and inter-process communication.

- How the Gateway pattern helps encapsulate the details of interacting with an external service.

- The main elements in an OpenAPI specification, and its use for generating clients.

What you will learn

- How to use the Micronaut OpenAPI Gradle plugin to generate a client from an existing specification.

- How to develop and test a Gateway for a specific external service (the OpenLibrary API).

- How to integrate the Gateway for improving the information you have about a book in the background.

What you will need

- Java 17 or newer: install from Adoptium.

- An IDE with Gradle and Java support: in this worksheet, we discuss IntelliJ IDEA.

- A local installation of Docker Desktop.

Ensure Docker Desktop is running before you start this practical.

- Note: if using a lab machine, you will need to boot Windows as Docker Desktop is not installed in the Linux image.

If you have a recent installation of Docker Desktop (using Docker Engine 29 or newer), you will need to tell the Docker Java libraries to use version 1.44 of the Docker API, until this issue in Micronaut is fixed. From a Linux/Mac terminal, or from Git Bash in Windows, run this command:

echo api.version=1.44 > $HOME/.docker-java.properties

If you do not have Git Bash on Windows, you can run this from PowerShell instead:

"api.version=1.44" | set-content $HOME/.docker-java.properties -Encoding Ascii

What you will do

You will start from a solution of Practical 2 (either yours, or our model solution) and work through the rest of the sections.

Client generation

Before starting this section, you will need to have a solution to Practical 2. You can use your own, or you can start from the model solution of Practical 2.

Obtaining the OpenAPI specification

We want to integrate an external service to have better information about our books. For this practical, we have chosen the OpenLibrary API, as they have an OpenAPI sandbox that we can experiment with.

As a first step, visit their sandbox (see link above), and download the openapi.json file linked from under the “Open Library API” header (the link in “Contribute by proposing edits to openapi.json”).

Move and rename the openapi.json file to src/main/openapi/openlibrary-0.1.0.json in your project.

Adding the Micronaut OpenAPI Gradle plugin

If we want to generate clients from the OpenLibrary OpenAPI spec, we will need to add the Micronaut OpenAPI Gradle plugin to our build.gradle.

Open your build.gradle file, and add this line to the plugins section:

id("io.micronaut.openapi") version "4.6.1"

Configuring the Micronaut OpenAPI Gradle plugin

We also need to let the Micronaut OpenAPI Gradle know about the OpenLibrary spec.

Within the micronaut section of the build.gradle file, add this block:

openapi {

client("openlibrary", file("src/main/openapi/openlibrary-0.1.0.json")) {

apiPackageName = "uk.ac.york.cs.eng2.books.openlibrary.api"

modelPackageName = "uk.ac.york.cs.eng2.books.openlibrary.model"

clientId = "openlibrary"

useReactive = false

}

}

Save the file and reload all Gradle projects as we did at the end of the first section of Practical 2.

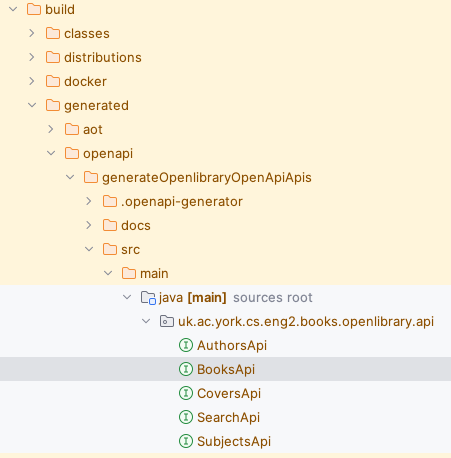

If you did it correctly, the “Gradle” drawer in IntelliJ should show a new “Tasks - micronaut openapi” folder.

Try running the build Gradle task.

You will see that a number of classes ending in Api were generated for us within the build/generated/openapi folder:

Specifying the base URL of the OpenLibrary API

You may notice that we specified a clientId in the openapi block.

This allows us to configure the generated client from the application.properties files.

Specifically, we’ll need to tell Micronaut how to access the OpenLibrary API.

Edit the src/main/resources/application.properties file, and add these lines:

micronaut.http.services.openlibrary.url=https://openlibrary.org/api

Examining the generated code

Have a look through the generated code. You may notice one oddity - the return types are all plain Objects, like this one from BooksApi:

/**

* {@summary Read Isbn}

*

* @param isbn (required)

* @return Successful Response (status code 200)

* or Validation Error (status code 422)

*/

@Get("/isbn/{isbn}")

Object readIsbnIsbnIsbnGet(

@PathVariable("isbn") @NotNull Object isbn

);

Unfortunately, it seems that at the moment the OpenAPI specification is manually written, and that they do not use schemas. We will have to write additional logic in our gateway that understands the format of the response.

Creating the gateway

Let’s say that we want to fetch additional information about a book by its ISBN from OpenLibrary.

We want to shield most of our application from the details of talking to OpenLibrary, so we will apply the Gateway pattern that we discussed in the lecture.

Adding the HTTP client to the regular dependencies

The build.gradle normally only includes the HTTP client for compilation and for testing.

We will need it for our regular implementation as well.

Remove these two lines from the dependencies of your build.gradle (they may be separate from each other):

compileOnly("io.micronaut:micronaut-http-client")

testImplementation("io.micronaut:micronaut-http-client")

Add this line to the dependencies:

implementation("io.micronaut:micronaut-http-client")

Reload all Gradle projects, since we changed the build.gradle file.

Designing the Gateway interface

Most of the application only needs to know that given an ISBN, we can get some additional information.

Let’s encode this in a new interface, within a new gateways subpackage:

public interface BookCatalogGateway {

Optional<BookCatalogInfo> findByIsbn(String isbn);

}

Create the BookCatalogInfo within the same package.

For this practical, we will keep it simple and just keep a List<String> of publisher names in it (with the appropriate getter/setter).

Writing the scaffold of the Gateway implementation

Create an OpenLibraryBookCatalogGateway class in the gateways subpackage which implements the BookCatalogGateway.

Annotate it with @Singleton so Micronaut will automatically create an instance of it and inject it wherever a BookCatalogGateway is requested.

Inject a BooksApi into OpenLibraryBookCatalogGateway.

You would now need to implement the findByIsbn method, using the readIsbnIsbnIsbnGet method in BooksApi.

For now, just write enough for the code to compile (e.g. just return Optional.empty()).

Designing a test case for the gateway

Given that the method only returns a raw Object, we’ll need specific logic in our gateway to cast this down to the correct type and fill in the BookCatalogInfo with the appropriate information.

It’s best to do this in a test-driven way.

Create an OpenLibraryGatewayTest test class in the appropriate package within src/test/java, and write a test that uses the gateway to fetch the information of some book in the Open Library by ISBN (for instance, 1524797162 is the ISBN of a videogame book).

It should have an assertion about having “Del Rey” as its sole publisher.

Try running the test: it should fail, as we don’t have a proper implementation of the gateway method yet.

Completing the Gateway implementation

It’s time to finish implementing the gateway method so our test passes.

The problem is that the readIsbnIsbnIsbnGet method in BooksApi only returns a raw Object: you’ll need to use the debugger to find out what is its actual type, and then cast it to the correct type to extract the desired information (the names of the publishers) and put it in the BookCatalogInfo.

You may find it useful to experiment with the OpenLibrary Swagger UI yourself and see what the response looks like.

In terms of ISBNs, the endpoint only takes ISBNs of books in the Open Library: for example, use 1524797162 (it’s the ISBN of a videogame book).

You’ll most likely need to use instanceof checks and cast down to Map<String, Object> and List<String> where needed.

You should return Optional.empty() if the response is not in the format if you expect or if an exception is thrown while invoking the OpenLibrary API: you may want to print an error message in those situations (in a production website, you’d log a warning of some kind).

This downcasting is obviously somewhat fragile, as OpenLibrary may decide to change the format of their response at any time, but at least all that is encapsulated in your gateway, and you have a test to automatically detect if they have changed the API in a breaking way.

Integrating the gateway

Now that we have the gateway and a test for it, let’s integrate it into the application.

In this practical, when someone adds a book with an ISBN, we will immediately request the additional information from OpenLibrary and use it.

This simple approach has its advantages and disadvantages:

- Good: it’s the simplest to implement.

- Good: the book record will be complete by the time we respond to the addition of a book.

- Bad: it adds latency to the processing of the request.

- Bad: OpenLibrary may be down while someone is trying to add a book. If we are not careful, users may see error messages while trying to add books just because of that.

In the next practical, we will show you how to postpone this work to a background process, to reduce latency.

Adding ISBNs to books

Create a database migration script called V4__add-isbn.sql, with this content:

alter table book

add isbn varchar(13);

Add the isbn field to your entities and DTOs as needed, and update any controllers and their tests accordingly (e.g. setting the ISBN while creating and updating a Book).

Using the gateway from the controller

Inject a BookCatalogGateway into your BooksController.

Update the controller so that if a publisher is not explicitly mentioned but an ISBN is provided, the controller will try to use the gateway to obtain the names of the publishers.

If one or more publishers are found, the controller should try to reuse the Publisher in the database whose name matches the first publisher mentioned by OpenLibrary, or create a new one if it does not.

Testing the controller’s use of the gateway

You will need to update the BooksController tests to cover the use of the gateway.

To avoid having the unit tests depend on an external service, use a method annotated with @MockBean to return a Test Double of the connection object used by the gateway (the BooksApi), which always provides the same response.

You can use Mockito to quickly implement that Test Double, instead of having to write a full implementation of the interface.

Remember to add Mockito to your build.gradle first, as we did on Practical 3.

For our case, it could look like this:

BooksApi mock = mock(BooksApi.class);

when(mock.readIsbnIsbnIsbnGet(any())).thenReturn(

Map.of("publishers", Collections.singletonList("P Ublisher"))

);

The any() call uses Mockito’s argument matchers to indicate that the mock will always return the same predefined response for any ISBN.

Once your tests pass again, continue.

Trying the gateway from the Swagger UI

Start the application via the Gradle run task, and use the Swagger UI to try an add a book with an ISBN listed in OpenLibrary.

You may notice that it does not populate the Publisher as you expected.

If you look at the messages coming from your Micronaut application, you will see something like this:

io.micronaut.http.client.exceptions.HttpClientException:

You are trying to run a BlockingHttpClient operation on a netty event loop thread.

This is a common cause for bugs: Event loops should never be blocked.

You can either mark your controller as @ExecuteOn(TaskExecutors.BLOCKING), or use the reactive HTTP client to resolve this bug. There is also a configuration option to disable this check if you are certain a blocking operation is fine here.

The error is self-explanatory, but the basic idea is that the controller methods are invoked on a loop that receives every incoming HTTP request. Anything that takes too long (like invoking an external service) could result in the event loop not being able to handle requests at a consistent pace.

As the error says, you should add @ExecuteOn(TaskExecutors.BLOCKING) to your controller class, and restart the application.

It should work now as expected: the event loop will dispatch the processing of each request to a separate thread pool, meaning that it will not be slowed down by a particular request taking longer than the others.

Additional exercises

Congratulations on completing this practical!

We hope that you found it interesting to use the Gateway pattern and use it from a controller.

Here are some ideas on things you could try to expand upon what we have covered in this practical.

Enable caching in the gateway

To reduce the load imposed on OpenLibrary from our application and improve its responsiveness, it may be good to adopt Micronaut Cache to automatically cache the results from our gateway.

For inspiration, you may want to check the example mentioned in the lecture slides for service integration.

It should be a matter of adding the library, adding some configuration to your application.properties, and adding the appropriate @Cacheable annotation to the right method in your gateway implementation.

Use the Circuit Breaker pattern

You may have noticed that the example in the service integration lecture also uses the Micronaut Retry library to implement the Circuit Breaker pattern.

This pattern would be useful if OpenLibrary had intermittent problems.

The @CircuitBreaker annotation implies a number of changes in behaviour:

- There will be a few retries when making the original request, instead of a single one.

- If several errors are observed in a given period of time, the “circuit breaker” will go into a “closed” state and all requests will immediately fail. This has several benefits:

- The application will be more responsive, as it won’t be waiting every time for a failing service.

- The failing service will see a reduction in its load, which will make it easier for its administrators to bring it back up.

- After some time, the “circuit breaker” will go to a half-open state, in which it will attempt to make a real request again. If that works, it will go back to an “open” state where requests go to OpenLibrary as usual. Otherwise, it will go back to the above “closed” state and wait for some time before trying again.

Since you cannot directly change the generated OpenAPI client (BooksApi), you may need to apply the @CircuitBreaker interface to a new subinterface of BooksApi that you would create yourself.

Use more information from OpenLibrary

You could try to pull in more information from OpenLibrary. For instance, you could get the title as well, or the authors.

Practical 4: Reacting to events

This is the worksheet for the third practical in the Engineering 2 module.

We will give you the code for a simulator of the check-in desks in an airport, and you will create an additional Micronaut project that will consume its events through Micronaut Kafka to produce a number of reports about the current state of the airport.

Work through every section of the practical in sequence, without missing steps. You can use the “Previous” and “Next” buttons on the left and right sides to quickly go between sections.

Introduction

In this practical, you will write a microservice that will consume the events from a simulated airport check-in area, and produce various reports about the check-in desks and the events so far.

To do so, you will use Micronaut Kafka.

You will also revisit the solution from Practical 3 and rework it to fetch information in the background, to reduce the latency of requests to add books. To do so, we will use Kafka consumers and producers to decouple the processing of book requests from the use of the external API.

What you should already know

You should be able to:

- Implement basic Micronaut microservices that respond to HTTP requests (from Practical 1).

- Query and update a relational database within a Micronaut microservice using Micronaut Data (from Practical 2).

- Calling other microservices from your own microservice (from Practical 3).

You should be familiar with these concepts from the lectures:

- The main components in an event-driven architecture.

- The core concepts in Apache Kafka: cluster, broker, topic, partition, record, and the structure of a record (key, message body, and a timestamp).

- The challenges around achieving durable and scalable stateful event processing, and common strategies (partitioned state, re-keying).

- The use of interaction-based testing (Mockito) for checking the correct use of Kafka producers.

What you will learn

- How to create a project that uses Micronaut Kafka.

- How to automatically create topics while starting a Micronaut microservice.

- How to implement Kafka producers and consumers using Micronaut Kafka.

- How to test Kafka producers and consumers using JUnit.

What you will need

- Java 17 or newer: install from Adoptium.

- An IDE with Gradle and Java support: in this worksheet, we discuss IntelliJ IDEA.

- A local installation of Docker Desktop.

Ensure Docker Desktop is running before you start this practical.

- Note: if using a lab machine, you will need to boot Windows as Docker Desktop is not installed in the Linux image.

If you have a recent installation of Docker Desktop (using Docker Engine 29 or newer), you will need to tell the Docker Java libraries to use version 1.44 of the Docker API, until this issue in Micronaut is fixed. From a Linux/Mac terminal, or from Git Bash in Windows, run this command:

echo api.version=1.44 > $HOME/.docker-java.properties

If you do not have Git Bash on Windows, you can run this from PowerShell instead:

"api.version=1.44" | set-content $HOME/.docker-java.properties -Encoding Ascii

What you will do

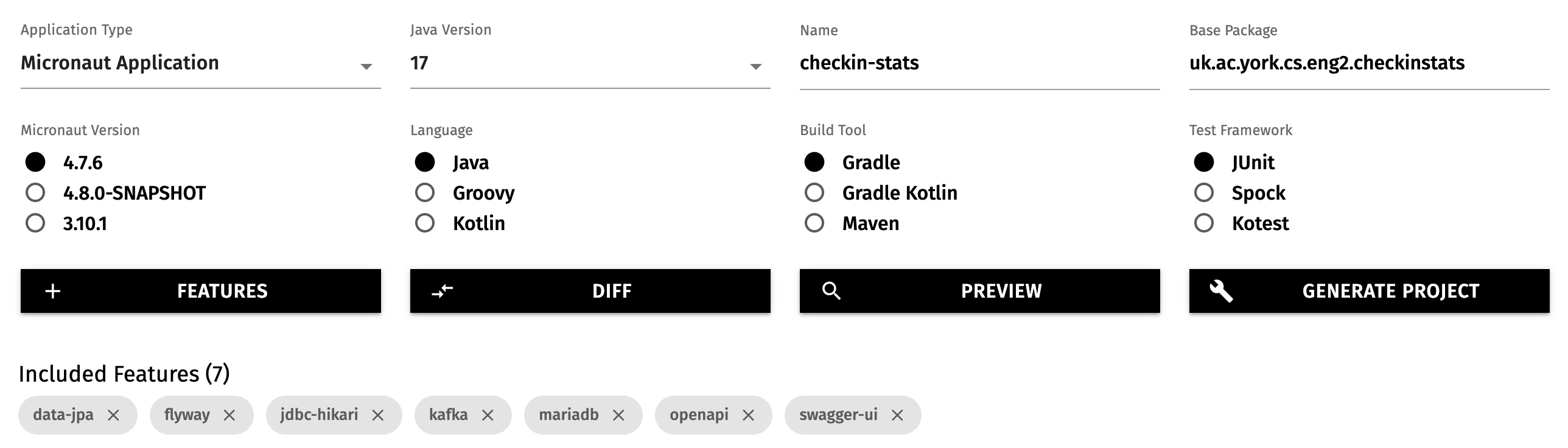

You will create a new Micronaut project that will consume the events from this simulator (also written as a Micronaut application).

You will also revisit your solution to Practical 3 (or its model solution) to move the invocations to OpenLibrary to a background process.

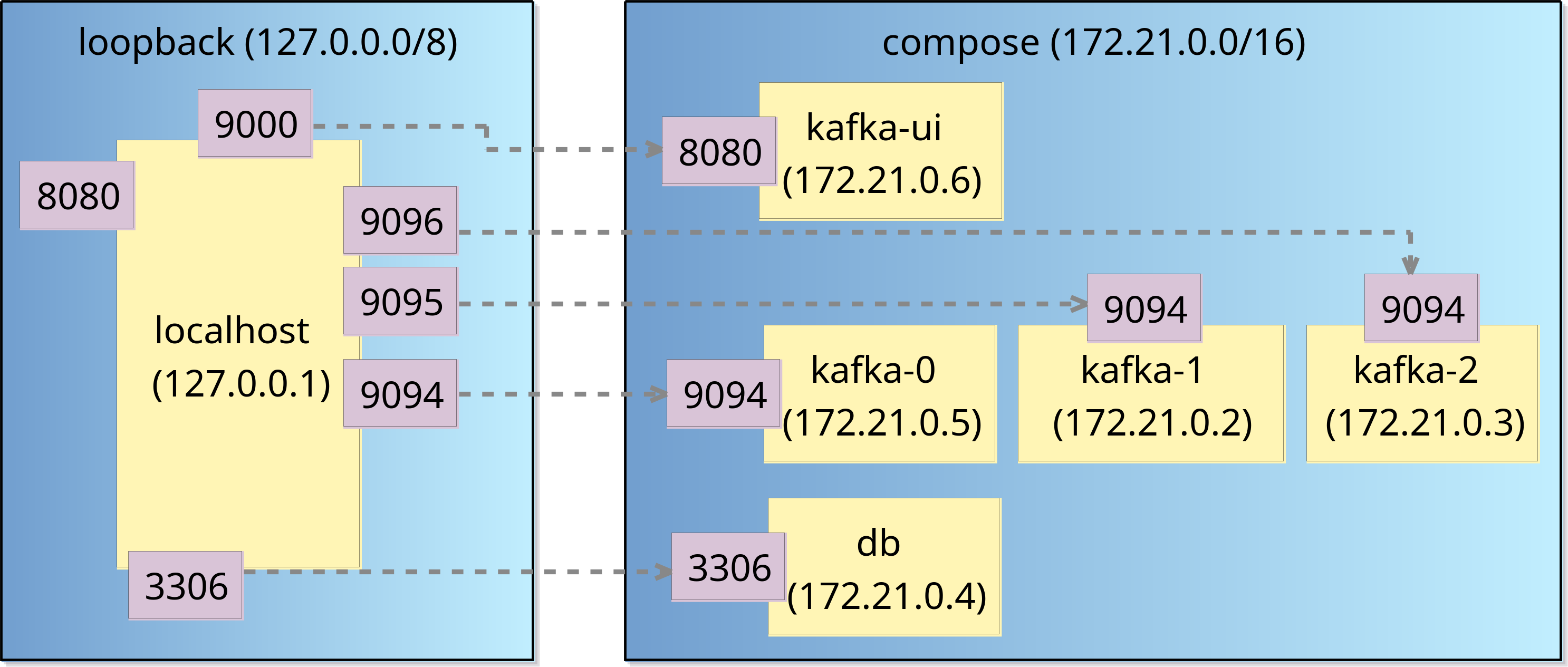

Creating the project